Most AI projects fail to deliver measurable business impact. Only 20% of AI initiatives achieve ROI, with just 2% truly transforming organizations. A clear, strategic, step-by-step approach is essential for success. This guide equips leaders with a practical AI integration roadmap.

Table of Contents

- Prerequisites: What You Need Before Starting AI Integration

- Developing A Tailored AI Roadmap And Strategy

- Implementation Steps: Building And Deploying AI Agents

- Governance And Ethical Considerations In AI Deployment

- Common Mistakes And Troubleshooting

- Expected Outcomes, Timelines, And Success Metrics

- Explore AlbTech Solutions For AI Integration Success

- Frequently Asked Questions

Key takeaways

| Point | Details |
|---|---|
| Prerequisites matter | Strong data quality, AI skills training, and cloud infrastructure are essential before starting integration. |
| Strategic roadmaps boost success | A tailored AI roadmap aligned to business goals increases project success rates from 20% to 60%. |
| Human oversight is critical | Human-in-the-loop models ensure AI outputs remain accurate and trustworthy in 2026 deployments. |
| Governance reduces risk | Clear ethical frameworks and policies build stakeholder confidence and regulatory compliance. |
| Measurable results take time | Expect efficiency gains of up to 40% within 6 to 18 months of deployment. |

Prerequisites: what you need before starting AI integration

Before diving into AI implementation, your organization needs solid foundations. Most failures happen because leaders skip these critical prerequisites.

Start with role-based AI skills development across your teams. Organizations that train by role report faster and more scalable AI adoption. Your finance team needs different AI capabilities than your operations staff.

Data quality determines AI model accuracy. High-quality, well-labeled data is foundational for AI model training and successful integration. Clean your data sources first. Remove duplicates, fix errors, and establish consistent labeling standards.
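The cleanup steps above can be sketched in a few lines of Python. This is a minimal illustration, not a production pipeline: the record layout (`text` and `label` fields), the `LABEL_ALIASES` mapping, and the sample rows are all assumptions made for the example.

```python
from collections import Counter

# Hypothetical mapping from inconsistent label spellings to one canonical label.
LABEL_ALIASES = {
    "inv": "invoice",
    "invoice": "invoice",
    "invoices": "invoice",
    "po": "purchase_order",
    "purchase order": "purchase_order",
}


def normalize_label(raw_label: str) -> str:
    """Lowercase and trim a label, then map known aliases to a canonical form."""
    key = raw_label.strip().lower()
    return LABEL_ALIASES.get(key, key)


def clean_records(records: list[dict]) -> list[dict]:
    """Drop rows with empty text, normalize labels, and remove duplicates."""
    seen = set()
    cleaned = []
    for record in records:
        text = " ".join(record.get("text", "").split())  # collapse whitespace
        if not text:
            continue  # skip rows with no usable content
        label = normalize_label(record.get("label", ""))
        key = (text.lower(), label)
        if key in seen:
            continue  # duplicate once text and label are normalized
        seen.add(key)
        cleaned.append({"text": text, "label": label})
    return cleaned


# Made-up sample rows for illustration.
raw = [
    {"text": "Invoice 1001  from Acme", "label": "INV"},
    {"text": "invoice 1001 from acme", "label": "invoice"},
    {"text": "", "label": "po"},
    {"text": "PO 77 for widgets", "label": "Purchase Order"},
]
cleaned = clean_records(raw)
label_counts = Counter(r["label"] for r in cleaned)
print(cleaned)
print(label_counts)
```

Counting labels after cleaning is a quick way to spot classes that are underrepresented before training begins.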

Infrastructure requirements:

- Cloud-based platforms that scale with growing AI workloads

- Secure data storage with proper access controls

- API connectivity between existing systems

- Computing resources sufficient for model training and inference

Organizational alignment separates successful projects from failures. Secure executive sponsorship early. Define clear ownership for AI initiatives. Build cross-functional teams that understand both business processes and technology capabilities.

Pro Tip: Complete an AI readiness assessment before committing resources to identify gaps in data, skills, or infrastructure that could derail your project.

Your technology stack matters less than your organizational readiness. Companies with strong foundations see results in months rather than years. Review AI automation case studies to understand how proper preparation accelerates outcomes.

Developing a tailored AI roadmap and strategy

A structured roadmap transforms vague AI ambitions into executable plans. Only 20% of AI projects achieve ROI without a roadmap. Your strategy needs seven interconnected workstreams.

Create your roadmap using these components:

- Technology architecture defining your AI platform and tools

- Data strategy covering collection, quality, and governance

- Capability building through training and hiring

- Use case prioritization based on business impact

- Governance framework for ethical deployment

- Change management preparing teams for new workflows

- Measurement systems tracking ROI and adoption

Prioritize use cases carefully. Look for processes that are repetitive, data-rich, and currently consume significant manual effort. Deploying AI incrementally with reusable use cases accelerates learning and maximizes ROI. For example, a customer service chatbot you build can be adapted for internal IT support.
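One simple way to make this prioritization explicit is a weighted score across the three criteria. The sketch below is only an illustration: the weights, the 1 to 5 rating scale, and the candidate ratings are assumptions, not figures from any study.

```python
from dataclasses import dataclass

# Hypothetical weights; adjust to reflect your own priorities. They sum to 1.0.
WEIGHTS = {"repetitiveness": 0.3, "data_richness": 0.3, "manual_effort": 0.4}


@dataclass
class UseCase:
    name: str
    repetitiveness: int  # 1 (rare, varied) to 5 (highly repetitive)
    data_richness: int   # 1 (little data) to 5 (abundant, structured data)
    manual_effort: int   # 1 (minimal) to 5 (heavy manual effort today)

    def score(self) -> float:
        """Weighted sum of the three criteria, on the same 1 to 5 scale."""
        return round(sum(getattr(self, k) * w for k, w in WEIGHTS.items()), 2)


# Made-up ratings for illustration.
candidates = [
    UseCase("Customer service chatbot", 5, 4, 4),
    UseCase("Contract review", 2, 3, 5),
    UseCase("Invoice data entry", 5, 5, 3),
]
ranked = sorted(candidates, key=lambda uc: uc.score(), reverse=True)
for uc in ranked:
    print(f"{uc.name}: {uc.score()}")
```

A score like this is a conversation starter for stakeholders rather than a decision rule; the ratings themselves should come from the people who run each process.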

Set measurable goals for each initiative. Define success metrics before deployment. Track cost reduction, time savings, error rates, and user adoption. Vague objectives like "improve efficiency" lead to disappointment.

Your roadmap should span 12 to 24 months with quarterly milestones. Break large projects into smaller pilots that deliver value quickly. Each success builds momentum and organizational confidence.

Key roadmap elements:

- Quick wins demonstrating value within 90 days

- Medium-complexity projects building on initial learnings

- Transformational initiatives requiring broader organizational change

- Continuous improvement cycles refining deployed solutions

Include governance policies from day one. Define who approves AI model deployments. Establish review processes for output quality. Create escalation paths when AI systems produce unexpected results.

Explore AI resources and strategies to refine your approach. Consider AI automation services if internal expertise is limited. Browse the AI tools collection to identify platforms aligned with your roadmap.

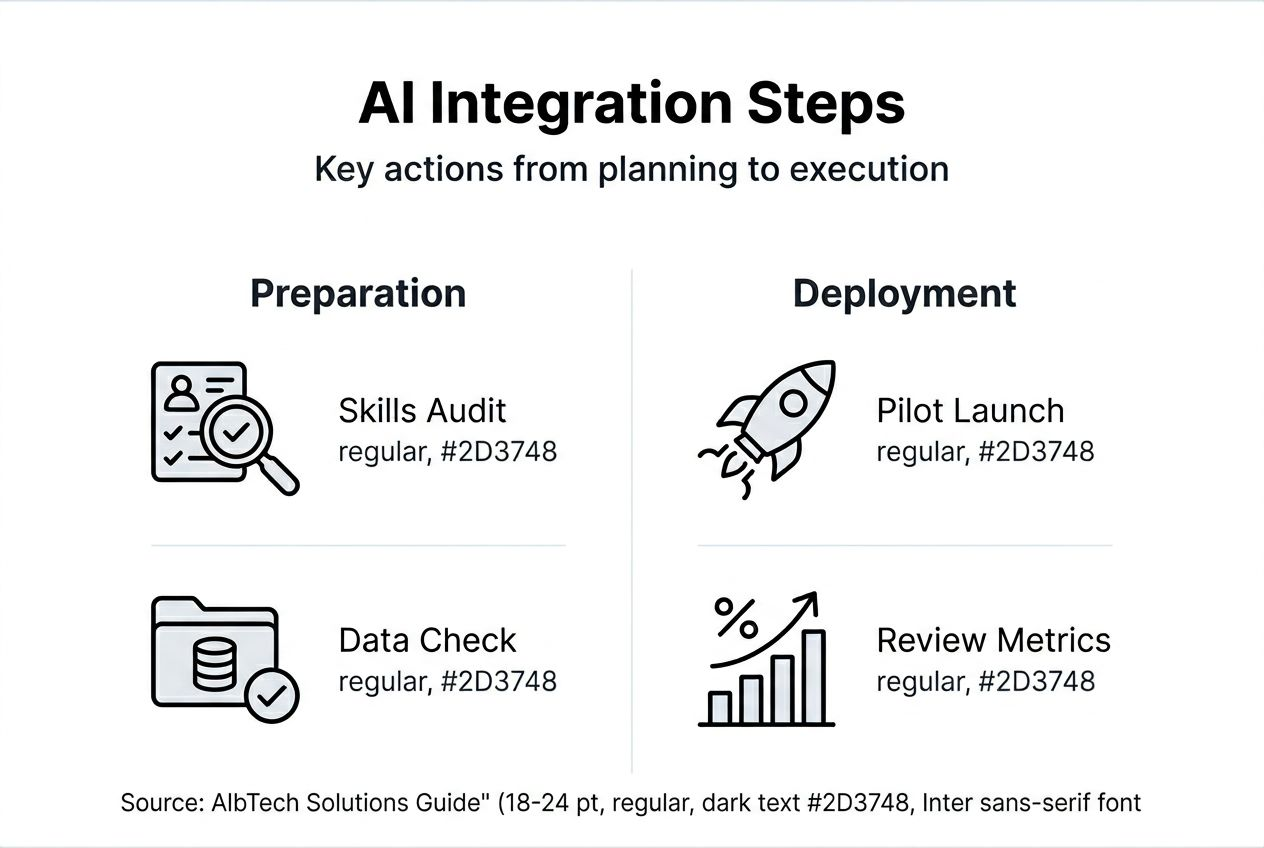

Implementation steps: building and deploying AI agents

Execution separates plans from results. Follow these implementation steps to deploy AI agents that deliver measurable business value.

- Define specific use cases tied to business pain points. Document current process steps, time requirements, and error rates. Identify where AI can remove bottlenecks or improve accuracy.

- Prepare your data for model training. Clean historical records. Remove personally identifiable information if needed. Label datasets with domain expert input to ensure accuracy.

- Train and fine-tune models with iterative testing. Start with pre-trained models when possible to accelerate development. Validate outputs against known correct results. Involve business users in reviewing model predictions.

- Integrate AI agents within existing workflows. Build API connections between your AI platform and business systems. Design user interfaces that feel familiar to your teams. Ensure seamless handoffs between AI and human workers.

- Implement human oversight mechanisms. Human-in-the-loop approaches remain essential in 2026 to manage AI risks and productivity. Configure review queues for high-stakes decisions. Set confidence thresholds that trigger human review.
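The oversight step can be illustrated with a small routing function. This is a sketch under stated assumptions: the 0.85 threshold, the `HIGH_STAKES_CATEGORIES` set, and the `Prediction` fields are hypothetical and would need tuning for each use case.

```python
from dataclasses import dataclass

# Assumed values for illustration; tune them per use case.
CONFIDENCE_THRESHOLD = 0.85
HIGH_STAKES_CATEGORIES = {"refund", "account_closure"}


@dataclass
class Prediction:
    item_id: str
    category: str
    confidence: float  # model's self-reported score between 0.0 and 1.0


def route(prediction: Prediction) -> str:
    """Return 'human_review' or 'auto_approve' for a single prediction."""
    if prediction.category in HIGH_STAKES_CATEGORIES:
        return "human_review"  # high-stakes decisions always get a reviewer
    if prediction.confidence < CONFIDENCE_THRESHOLD:
        return "human_review"  # low confidence triggers review
    return "auto_approve"


# Made-up predictions for illustration.
predictions = [
    Prediction("t-1", "password_reset", 0.97),
    Prediction("t-2", "password_reset", 0.62),
    Prediction("t-3", "refund", 0.99),
]
review_queue = [p.item_id for p in predictions if route(p) == "human_review"]
print(review_queue)
```

Checking the high-stakes rule before the threshold means a confident model still cannot bypass review on decisions the business has flagged as critical.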

Deployment best practices:

- Start with pilot groups of 10 to 20 users

- Gather feedback weekly during the first month

- Monitor error rates and user satisfaction daily

- Iterate quickly based on real-world performance

- Scale gradually after proving pilot success

Pro Tip: Schedule daily standups during the first two weeks of deployment to catch issues fast and maintain team confidence.

Document everything. Record model performance metrics, user feedback, and system configurations. This documentation accelerates future AI projects and helps troubleshoot issues.

Partner with experts for complex deployments. AI agents development services provide specialized expertise. Managed AI support ensures your systems run smoothly after deployment.

Governance and ethical considerations in AI deployment

Governance frameworks protect your organization from AI risks while building stakeholder trust. Establishing AI governance policies early reduces regulatory and ethical risks and improves compliance.

Create clear AI policies aligned with federal regulations. The 2026 AI Executive Orders require transparency in automated decision-making for government contractors. Industry-specific regulations may impose additional requirements. Document how your AI systems make decisions and maintain audit trails.

Train staff on responsible AI principles:

- Fairness in model predictions across demographic groups

- Transparency in how AI systems reach conclusions

- Accountability for AI outputs and decisions

- Privacy protection for customer and employee data

- Security measures preventing AI system manipulation

Visible leadership commitment to responsible AI improves trust and operational alignment. Assign an AI ethics officer to review new deployments. Form a cross-functional AI governance committee that meets monthly.

Monitor AI outputs continuously. Test for bias in model predictions quarterly. Review edge cases where AI performs poorly. Update training data to address identified gaps. Transparency is directly linked to higher AI acceptance and lower stakeholder distrust.
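A quarterly bias test can start with something as simple as comparing positive-prediction rates across groups, a check often called the demographic parity gap. The sketch below is one possible check among many; the sample data and the `MAX_GAP` tolerance are made up for illustration and are not a regulatory standard.

```python
from collections import defaultdict


def positive_rates(outcomes: list[tuple[str, int]]) -> dict[str, float]:
    """Share of positive predictions (1) per group from (group, prediction) pairs."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, prediction in outcomes:
        totals[group] += 1
        positives[group] += prediction
    return {g: positives[g] / totals[g] for g in totals}


def parity_gap(rates: dict[str, float]) -> float:
    """Difference between the highest and lowest group rates."""
    return max(rates.values()) - min(rates.values())


# Made-up sample: group label and whether the model approved (1) or not (0).
sample = [("A", 1), ("A", 1), ("A", 0), ("A", 1),
          ("B", 1), ("B", 0), ("B", 0), ("B", 1)]
rates = positive_rates(sample)
gap = parity_gap(rates)
MAX_GAP = 0.2  # assumed tolerance, not a regulatory standard
needs_review = gap > MAX_GAP
print(rates, round(gap, 2), needs_review)
```

A gap above the tolerance does not prove the model is unfair, but it is a reasonable trigger for the governance committee to examine the training data and edge cases.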

"Governance is not about slowing down AI adoption. It's about deploying AI systems that stakeholders trust and that deliver consistent value over time."

Mitigate compliance risks proactively. Document data sources and model training processes. Implement version control for AI models. Create rollback procedures for underperforming deployments. Maintain records of governance committee decisions.

Build governance into your culture. Celebrate teams that identify AI risks early. Reward transparency over hiding problems. Make governance reviews a standard part of your deployment process.

Assess your AI governance readiness to identify policy gaps. Review AI governance policies from regulatory bodies. Study responsible AI practices from industry leaders. Prioritize transparency in AI to build long-term stakeholder confidence.

Common mistakes and troubleshooting

Most AI integration failures follow predictable patterns. Recognizing these pitfalls helps you avoid costly mistakes.

Lack of a clear vision causes up to 60% of AI project failures. Leaders launch initiatives without defining success metrics or business objectives. Teams build impressive technology that solves no real problem. Fix this by documenting specific outcomes before starting development.

Neglecting training stalls progress. Skipping capability building slows AI adoption and undermines program success. Your teams cannot leverage AI tools they don't understand. Invest in continuous learning programs tailored to each role.

Common integration mistakes:

- Overestimating AI capabilities and underestimating implementation complexity

- Deploying without sufficient testing in production-like environments

- Ignoring user feedback during pilot phases

- Failing to plan for ongoing model maintenance and retraining

- Choosing technology before understanding business requirements

Overreliance on fully autonomous AI creates problems. Current AI systems still make errors, especially on edge cases. Build human oversight into critical workflows. Configure confidence thresholds that trigger manual review. Train staff to identify when AI outputs need verification.

"The best AI implementations augment human capabilities rather than attempting full automation. This balanced approach reduces risk while maximizing efficiency gains."

Ignoring governance invites ethical and practical issues. Models trained on biased data produce discriminatory outputs. Systems lacking transparency erode stakeholder trust. Unmonitored AI deployments drift in accuracy over time. Implement governance frameworks from project inception.

Troubleshooting steps when projects struggle:

- Revisit business objectives and validate use case selection

- Audit data quality and expand training datasets

- Increase human oversight temporarily while addressing accuracy issues

- Gather detailed user feedback to identify friction points

- Bring in external expertise for fresh perspectives

Complete an assessment to avoid AI pitfalls specific to your organization. Review AI project failure statistics to understand common causes. Prioritize AI skills development to build internal capabilities.

Expected outcomes, timelines, and success metrics

Realistic expectations prevent disappointment and maintain stakeholder support. AI projects typically require 6 to 18 months for measurable impact. Your timeline depends on use case complexity, data readiness, and organizational change management.

Expect these phases:

- Months 1 to 3: Planning, data preparation, and pilot development

- Months 4 to 6: Pilot deployment and initial testing with small user groups

- Months 7 to 12: Refinement based on feedback and scaled deployment

- Months 13 to 18: Optimization and measurement of full business impact

Efficiency gains appear incrementally. Organizations reduce manual processing time by up to 40% after AI integration. Early wins might save 10 to 15% of time in specific workflows. Gains compound as you optimize models and expand deployment.

| Success Metric | Target Range | Measurement Frequency |
|---|---|---|
| Cost reduction | 20% to 40% | Quarterly |
| Time savings | 30% to 50% | Monthly |
| Error rate improvement | 50% to 70% decrease | Weekly |
| User adoption | 80%+ active usage | Daily during rollout |
| ROI achievement | Positive within 12 months | Quarterly |

Track both quantitative and qualitative metrics. Measure hard numbers like processing time and error rates. Gather user satisfaction through surveys and interviews. Monitor adoption rates to identify resistance early.

Key performance indicators:

- Transaction volume processed by AI versus manual methods

- Average handling time per task before and after AI deployment

- Customer satisfaction scores for AI-assisted interactions

- Employee satisfaction with AI tools and workflows

- System uptime and reliability percentages

Incremental deployments accelerate learning. Small pilots generate insights that improve subsequent phases. Each iteration builds organizational confidence and technical capabilities. Quick wins create momentum for larger transformational projects.

Document lessons learned continuously. Record what worked, what failed, and why. Share insights across teams to avoid repeating mistakes. This knowledge becomes invaluable for future AI initiatives.

Explore AI success stories from similar organizations. Review AI integration timelines to calibrate expectations. Study AI efficiency improvements achieved by early adopters in your industry.

Explore AlbTech Solutions for AI integration success

Transforming AI strategy into operational reality requires specialized expertise and proven tools. AlbTech Solutions builds custom AI agents that integrate seamlessly with your existing workflows, replacing manual processes with intelligent automation.

Our AI agents development services deliver voice agents, chat agents, and workflow automation tailored to your business requirements. We handle the technical complexity while you focus on strategic outcomes. Workflow automation solutions maximize efficiency gains by connecting AI capabilities across your systems.

Access our curated AI tools marketplace to discover leading platforms that accelerate your integration journey. Every tool is vetted for enterprise readiness and compatibility with modern workflows.

Partner with AlbTech to ensure responsible, scalable, and measurable AI deployment. Our team brings deep expertise in governance frameworks, change management, and technical implementation. We help you avoid common pitfalls while achieving results faster.

Frequently asked questions

How long does AI integration typically take to show results?

Initial AI integration impact typically emerges within 6 to 18 months depending on use case complexity and organizational readiness. Smaller pilot projects often show quick early benefits within 90 days, building momentum for larger initiatives. Expect gradual improvements rather than overnight transformation, with efficiency gains compounding as you optimize and scale deployments.

What are common pitfalls to avoid when integrating AI?

Unclear vision and skipping skills development account for the majority of AI integration failures. Leaders must define specific business objectives and success metrics before starting development. Neglecting organizational training leaves teams unable to leverage new AI capabilities effectively. Ignoring governance frameworks raises ethical risks and regulatory compliance issues that undermine stakeholder trust.

Why is human-in-the-loop important in 2026 AI deployments?

Human-in-the-loop approaches are essential to mitigate risks like hallucinations and security vulnerabilities. Current AI systems excel at pattern recognition but struggle with edge cases and nuanced judgment. Human oversight ensures outputs remain accurate and aligned with business policies. This balanced approach maximizes efficiency while maintaining control over critical decisions.

What data quality standards do I need for successful AI training?

Clean, well-labeled, and representative data is foundational for model accuracy. Remove duplicates, fix errors, and establish consistent labeling standards across datasets. Your data must cover diverse scenarios the AI will encounter in production. Include domain expert input during data preparation to capture business context that improves model predictions.

How do I measure ROI from AI investments?

Track specific metrics aligned with your business objectives such as cost reduction, time savings, error rate improvements, and user adoption. Calculate hard dollar savings from reduced manual effort and improved accuracy. Measure soft benefits like employee satisfaction and customer experience improvements. Compare baseline performance before AI deployment against results at 6-, 12-, and 18-month intervals to quantify impact.
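The baseline comparison and ROI arithmetic can be sketched as follows. Every figure here is hypothetical; the function names `roi_percent` and `percent_change` are introduced only for this example, and the ROI formula used is the common net-benefit-over-cost definition.

```python
def roi_percent(total_benefit: float, total_cost: float) -> float:
    """Net benefit divided by cost, expressed as a percentage."""
    return (total_benefit - total_cost) / total_cost * 100


def percent_change(baseline: float, current: float) -> float:
    """Relative change from baseline; negative values mean a reduction."""
    return (current - baseline) / baseline * 100


# All figures are made up for illustration.
baseline_minutes_per_task = 20.0
checkpoints = {6: 17.0, 12: 14.0, 18: 12.0}  # month -> minutes per task

for month, minutes in checkpoints.items():
    change = percent_change(baseline_minutes_per_task, minutes)
    print(f"Month {month}: {change:.0f}% change in handling time")

annual_savings = 180_000.0  # hypothetical hard-dollar savings
annual_cost = 120_000.0     # hypothetical licences, build, and support
print(f"ROI: {roi_percent(annual_savings, annual_cost):.0f}%")
```

Capturing the baseline before deployment is the part most often skipped; without it, none of the later checkpoints can be interpreted.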